This tutorial was written by Manish Hatwalne, a developer with a knack for demystifying complex concepts and translating "geek speak" into everyday language. Visit Manish's website to see more of his work!

If you're a senior developer or architect, you've likely weighed the OAuth vs. API keys tradeoff many times to balance simplicity against security and convenience against control. But those API security concerns always assumed traditional API clients: web apps, mobile apps, and predictable server-to-server integrations. Agentic AI systems challenge those assumptions.

Unlike traditional API clients, agentic AI systems are autonomous, tool-using, and inherently non-deterministic. They make real-time decisions, chain multiple API calls together, and sometimes take actions their creators never explicitly programmed. When an AI agent can decide to delete your production database or book a flight on your behalf, the stakes of API authentication are high, and accidents happen.

In this article, you'll reexamine the long-standing debate between OAuth and API keys through the lens of agentic AI.

What are API keys?

If you've used paid APIs from services like Claude, OpenAI, or Mailgun, you've already used API keys.

API keys are simple, static credentials: typically long alphanumeric strings that act as identifier and password rolled into one. They're issued by API providers and included in requests, usually as a header or query parameter, to authenticate the caller.

How API keys work

The workflow is straightforward. A developer generates an API key from a provider's dashboard, embeds it in their application, and sends it with every API request:

http

GET /data HTTP/1.1

Host: api.example.com

Authorization: Bearer sk_live_a8f3j29dk3j2d9fj2k3d

Accept: application/json

The server validates the key against its database and either grants or denies access. It doesn't involve any redirects, token exchanges, or complexity.
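To make the server side of that exchange concrete, here is a minimal sketch of key validation. It assumes a hypothetical in-memory store (`KEY_HASHES`) indexed by the first 12 characters of the key, and it stores only SHA-256 hashes rather than raw keys; real providers differ in how they store and look up keys.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical key store: maps a key prefix to the SHA-256 hash of the full key.
# Keeping only hashes means a leaked table does not expose usable keys.
KEY_HASHES = {
    "sk_live_a8f3": hashlib.sha256(b"sk_live_a8f3j29dk3j2d9fj2k3d").hexdigest(),
}


def extract_key(authorization_header: str) -> Optional[str]:
    """Pull the key out of an 'Authorization: Bearer <key>' header value."""
    scheme, _, value = authorization_header.partition(" ")
    if scheme.lower() != "bearer" or not value.strip():
        return None
    return value.strip()


def is_valid_key(api_key: str) -> bool:
    """Look up the stored hash by key prefix and compare in constant time."""
    expected = KEY_HASHES.get(api_key[:12])
    if expected is None:
        return False
    presented = hashlib.sha256(api_key.encode()).hexdigest()
    return hmac.compare_digest(presented, expected)
```

Note that the check answers only "is this key known?". Nothing in it says who is calling or what they may do, which is the gap the rest of this article explores.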

Traditional use cases

API keys have been used for machine-to-machine (M2M) authentication for years in controlled environments such as internal microservices, CI/CD pipelines, and monitoring tools.

Strengths / benefits of API keys

There's a reason API keys remain popular:

Simple to implement and deploy: No complicated flows, no authorization servers, no token management infrastructure.

Minimal overhead: No redirects, no token juggling, no refresh logic.

Useful for low-risk, tightly controlled systems: Where you trust the environment and the code using the key.

For instance, if a script syncs data between two internal databases at 3 a.m., using API keys is perfectly fine.

Limitations of API keys

The following are some shortcomings of API keys:

Long-lived and static by default: API keys have no built-in expiration mechanism. Once issued, a key remains valid until explicitly revoked. How and when it's revoked depends on provider tooling and team discipline.

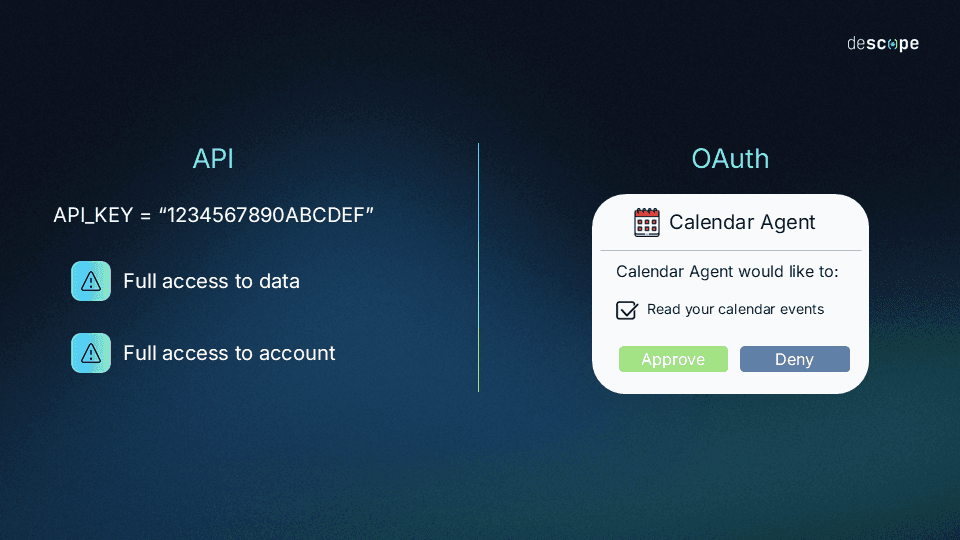

No scopes: API keys have no standardized concept of permissions. When it does exist, access control is defined ad hoc, per provider. The result is inconsistent granularity across services and a lack of interoperability.

Hard to rotate at scale: Rotating keys across dozens of services means coordinating deployments and risking downtime.

No built-in user delegation: API keys represent the application, not a specific user. There's no way to say "this action was taken by Alice".

Limited auditability and traceability: Log entries show the API key was used, but not by whom, why, or in what context.

It's worth noting that individual providers have closed some of these gaps independently. For example:

GitHub supports fine-grained personal access tokens (PATs) with repository-level scoping and expiration.

AWS limits what an access key can do through the IAM policies attached to the identity that owns the key.

Anthropic and OpenAI both offer workspace-scoped keys with configurable permissions.

But each of these is a proprietary implementation: different APIs, different defaults, different enforcement. An agentic system that calls tools across five providers encounters five separate permission models with no single standard determining how keys are issued, scoped, rotated, or revoked.

OAuth's value in this context isn't that it can do things API keys theoretically can't, but that it does them consistently, interoperably, and by a shared specification.

What OAuth provides over API keys

If you've ever clicked "Login with Google" or "Login with LinkedIn" on a website, you've seen OAuth in action.

OAuth 2.0 fundamentally rethinks API authentication by separating two concerns that API keys conflate: who you are (authentication) and what you can do (authorization).

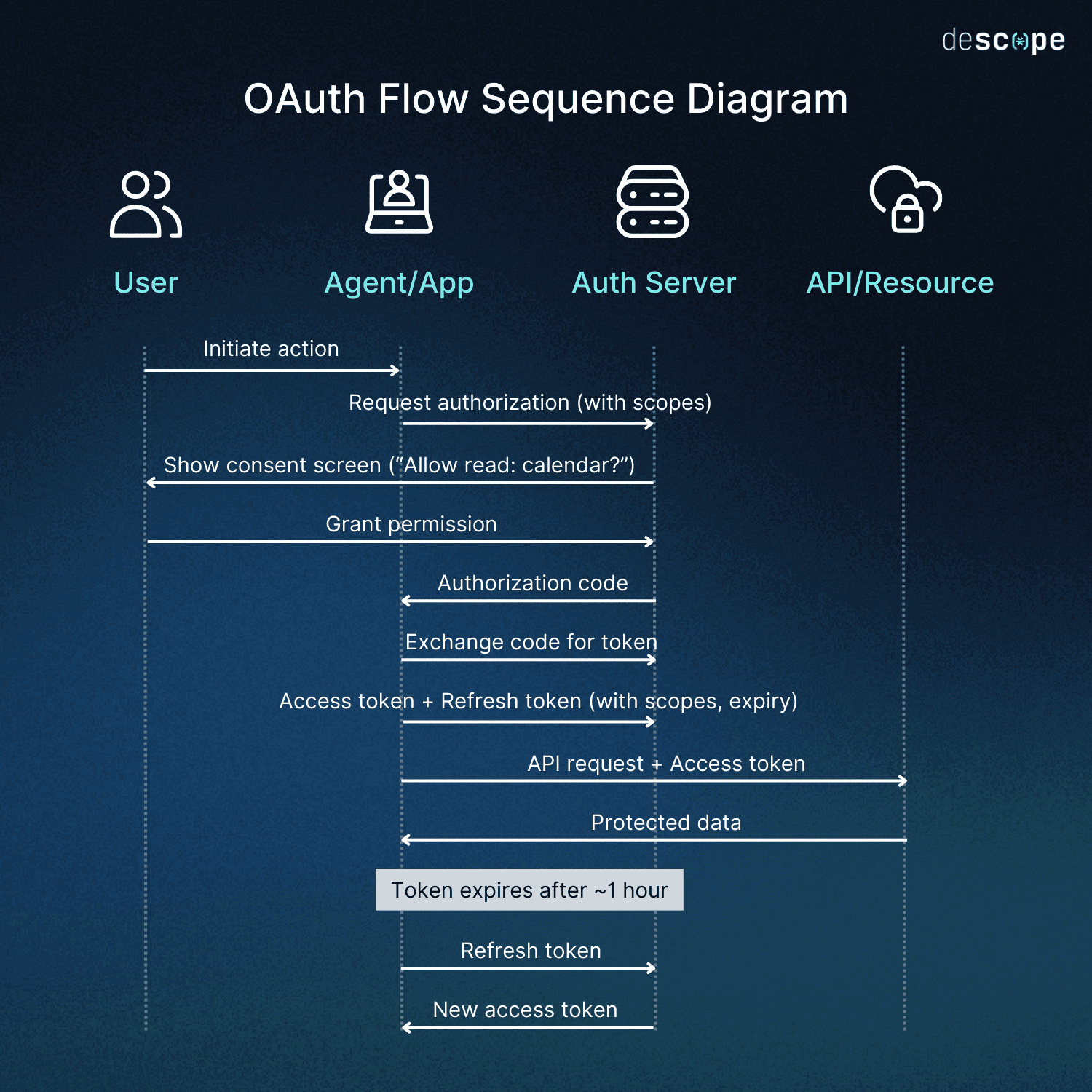

Instead of a single static credential, OAuth uses short-lived access tokens granted through explicit authorization flows. These tokens carry specific permissions (called scopes) that define exactly what actions the bearer can perform.

Here are the key ideas that make OAuth inherently more secure:

Separation between authentication and authorization: With API keys, proving your identity and getting permissions are the same thing. OAuth splits these apart. An identity provider verifies who you are, an authorization server decides what you can access, and a resource server validates your token. Each component has a single, well-defined job.

Fine-grained scopes defining permissions: Instead of all-or-nothing access, you request specific scopes like read:calendar or write:events. The authorization server issues a token that carries only those permissions. The API server enforces these boundaries automatically.

A simplified OAuth request looks like this:

http

GET /oauth/authorize?

response_type=code

&client_id=cal_agent_a8f3j29dk3

&redirect_uri=https://myagent.com/callback

&scope=read:calendar+write:events

&state=x8f2k9d3j2a

&code_challenge=E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM

&code_challenge_method=S256

HTTP/1.1

Host: auth.calendar.com

Token-based, short-lived, revocable access: Access tokens typically expire within an hour. When they do, the client uses a refresh token to get a new one without user intervention. But here's the key advantage: you can revoke a refresh token instantly, cutting off access without changing client credentials or redeploying code. The blast radius (extent of damage or impact in case of a leak) of a compromised token is limited to minutes, not months.

Standardized token exchange and per-action downscoping: OAuth defines a standardized mechanism for exchanging one token for another with different scopes, audiences, and lifetimes through OAuth Token Exchange (RFC 8693).

This enables a critical pattern for agentic AI systems: an agent can hold a base delegated token, then exchange it at runtime for narrowly scoped, ephemeral tokens tailored to each specific action or tool invocation.

For example:

An agent exchanges its base token for a `calendar:read` token when analyzing schedules

It exchanges again for a refund:create token scoped to a specific transaction

Each exchanged token is audience-restricted to the exact API being called and expires within minutes

This ensures agents always operate with the minimum permissions required at the moment of action, dramatically reducing blast radius and preventing privilege accumulation over time.

API keys lack any standardized, interoperable mechanism for token exchange or dynamic downscoping. Any similar behavior must be custom-built, and the result tends to be inconsistent and difficult to audit.

Ability to represent human intent through consent flows: Before any client gets access, the user sees a consent screen listing exactly what permissions are being requested. They click "Allow" or "Deny". This explicit grant means the client (say, an agent) operates with delegated authority, not stolen credentials. Because the user grants permission explicitly, the grant is recorded and can be audited later.
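The token exchange pattern described above can be sketched as a form-encoded request body. The parameter names and URN values come from RFC 8693; the token value, audience URL, and the helper name `build_token_exchange_body` are placeholders for illustration, and a real authorization server may require additional fields such as client authentication.

```python
from urllib.parse import parse_qs, urlencode

# Identifiers defined by RFC 8693 (OAuth 2.0 Token Exchange).
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange_body(base_token: str, audience: str, scope: str) -> str:
    """Form-encode a request that trades a base token for a narrower one."""
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": base_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,  # the single API the new token is meant for
        "scope": scope,        # the narrowed permission set
    }
    return urlencode(params)


# Placeholder values: exchange the agent's base token for a refund-only token.
body = build_token_exchange_body(
    base_token="base-delegated-token",
    audience="https://api.example.com/refunds",
    scope="refund:create",
)
```

The agent would POST this body to the authorization server's token endpoint with `Content-Type: application/x-www-form-urlencoded` and use the returned token for that one tool call.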

The diagram below shows how authentication, scopes, consent, and token lifecycle work together in the OAuth flow:

OAuth benefits for modern architectures

The capabilities of OAuth translate into concrete architectural advantages, especially as systems become more distributed and autonomous.

Delegation model aligns with trust boundaries: OAuth is designed for third-party access. An AI agent accessing your Google Calendar is fundamentally a third party, even if you built it yourself. OAuth's delegation model reflects this reality. The agent doesn't hold your password. It holds limited access that you approved.

Granular access provides least privilege by default: Scopes enforce the principle of least privilege automatically. An agent building calendar summaries gets `read:calendar` only. An agent scheduling meetings gets `read:calendar` and `write:events`. An agent never gets `delete:account` unless you explicitly grant it.

Revocation and observability of token usage: Every access token is traceable to a specific authorization grant, user, scope set, and timestamp. Your authorization server logs show exactly which agent did what, when, and under whose authority. If an agent misbehaves, you can revoke its token without affecting other systems or users.

Better fit for ecosystems with distributed autonomy and risk: When multiple agents operate semi-independently, each with different trust levels and capabilities, OAuth provides the identity and permission infrastructure to manage that complexity safely. You're not sharing one master key across a dozen autonomous systems. You're issuing narrowly scoped, individually revocable tokens.
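As a rough illustration of how scopes, expiry, and revocation combine at the resource server, consider the sketch below. The `AccessToken` shape and the in-memory `REVOKED_TOKEN_IDS` set are assumptions made for the example; production systems usually validate signed tokens or call an introspection endpoint instead.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AccessToken:
    token_id: str       # unique ID, so one token can be revoked on its own
    client_id: str      # which agent the token was issued to
    scopes: frozenset   # permissions granted at consent time
    expires_at: float   # Unix timestamp


# Hypothetical revocation list; a real deployment would use shared storage.
REVOKED_TOKEN_IDS = set()


def is_allowed(token: AccessToken, required_scope: str,
               now: Optional[float] = None) -> bool:
    """Allow a call only if the token is live, unrevoked, and carries the scope."""
    now = time.time() if now is None else now
    if token.token_id in REVOKED_TOKEN_IDS:
        return False
    if now >= token.expires_at:
        return False
    return required_scope in token.scopes
```

Revoking one misbehaving agent is then a single `REVOKED_TOKEN_IDS.add(token.token_id)`; tokens held by other agents are unaffected.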

Agentic AI: a new category of API client

Traditional API clients follow a script. A cron job runs at midnight, calls API endpoints sequentially, processes the data, and exits. A mobile app waits for user taps, makes predictable API calls, and displays results. The behavior is deterministic.

Agentic AI systems are largely autonomous, operating on goals rather than hardcoded instructions. They learn from context, adapt to situations, and generate action sequences you never explicitly programmed.

Consider a customer support agent built with an LLM. A user asks, "Why was I charged twice last month?" The agent doesn't follow a fixed script. Instead, it:

1. Reasons that it needs to check billing history.

2. Calls your payments API to retrieve customer transactions.

3. Analyzes the results and notices a duplicate charge.

4. Decides to check your refunds API to see if it was already addressed.

5. Determines it wasn't refunded.

6. Calls your refunds API to initiate a refund.

7. Responds to the user with confirmation.

As a developer, you don't program steps 1 through 7 explicitly. Instead, you give the agent access to tools (the APIs), describe what each tool does, and let it figure out the sequence. The agent chains multiple API calls, makes contextual decisions, and takes financial action autonomously.

This is fundamentally different from a webhook that processes a payment and triggers a predefined workflow. The agent's behavior emerges from its training and the situation at hand. It operates beyond simple scripts or predictable machine-to-machine workflows.

Why these characteristics matter for auth

This autonomy creates new security requirements that API keys were never designed to handle:

Need for granular, action-bound permissions: When an AI agent can dynamically choose which APIs to call, you cannot give it blanket access to everything. You need to constrain it to specific actions: read billing data, yes; initiate refunds up to $100 USD, maybe; delete customer accounts, absolutely not.

Need to audit and trace agent actions at fine resolution: When something goes wrong, you need logs that show exactly what the agent did, why, and under whose authority. "API key used at 3:47 PM" is not enough. You need "Agent-CustomerSupport-v2 initiated $47 USD refund for user Alice under scope refunds:create:limited at 3:47 PM."

Need for safe delegation of user authority without exposing secrets: The agent acts on behalf of users, but it should never hold their passwords or master credentials. It needs delegated, scoped authority that represents user intent.

Need for revocable, time-bounded permissions to reduce blast radius: If an agent starts misbehaving for any reason, you should be able to cut off its access immediately with instant revocation.

API keys can't deliver this level of control. You need OAuth for its granular scope enforcement and built-in token revocation.
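The first two requirements (action-bound limits and fine-grained audit entries) might look like the sketch below. It assumes the token's claims arrive as a dictionary with `client_id`, `sub`, and a space-delimited `scope` string, and it hardcodes the $100 limit from the example above; the function names and the `refund.create` action label are illustrative only.

```python
import json
from datetime import datetime, timezone

REFUND_LIMIT_USD = 100.00  # the ceiling used in the example above


def authorize_refund(claims: dict, amount_usd: float) -> bool:
    """Permit a refund only with the limited scope and within the ceiling."""
    granted = claims.get("scope", "").split()
    return "refunds:create:limited" in granted and 0 < amount_usd <= REFUND_LIMIT_USD


def audit_record(claims: dict, action: str, amount_usd: float,
                 allowed: bool, at: datetime) -> str:
    """Serialize who did what, under which authority, and when."""
    return json.dumps({
        "agent": claims.get("client_id"),
        "on_behalf_of": claims.get("sub"),
        "scope": claims.get("scope"),
        "action": action,
        "amount_usd": amount_usd,
        "allowed": allowed,
        "timestamp": at.isoformat(),
    })
```

Writing the audit entry for denied attempts as well as allowed ones gives you a record of what the agent tried to do, not only what it succeeded in doing.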

Comparing API keys vs. OAuth in agentic AI scenarios

Let's look at how a few real-life scenarios play out with API keys vs. OAuth.

Scenario 1: an agent managing a user's calendar

Consider an AI assistant that helps users manage their work calendar by reading events, finding conflicts, and suggesting optimal meeting times.

With API keys

The agent uses a static key with full calendar access:

python

import requests

API_KEY = "sk_live_calendar_full_access_key"

headers = {"Authorization": f"Bearer {API_KEY}"}

# Agent can read, write, delete anything

events = requests.get("https://api.calendar.com/events", headers=headers)

requests.delete("https://api.calendar.com/events/important-meeting", headers=headers)

The problems are serious. The API key grants complete access to all calendar operations. The agent can delete events, modify attendees, or clear entire calendars, even though it only needs to read events. If the key leaks through logs, prompt injection, or model output, attackers gain full calendar control. There's no user consent, no audit trail showing which specific agent performed which action, and no way to distinguish agent requests from legitimate server operations in logs.

With OAuth

The user explicitly grants limited access through a consent flow:

python

from requests_oauthlib import OAuth2Session

# Agent requests only read access

oauth = OAuth2Session(

client_id='calendar_agent_client',

scope=['read:calendar']

)

# User sees: "Calendar Agent wants to: Read your calendar events"

# User clicks "Allow"

# Agent receives a token limited to reading

token = oauth.fetch_token(

'https://auth.calendar.com/token',

authorization_response=callback_url

)

# This works

events = oauth.get('https://api.calendar.com/events')

# This fails - the token doesn't carry the write:events scope

oauth.post('https://api.calendar.com/events', json={...}) # 403 Forbidden

The token expires after an hour. If the agent misbehaves, the user can revoke access instantly from their security settings. Audit logs clearly show: "calendar_agent_client accessed user Alice's calendar with scope read:calendar at 2:34 PM". The blast radius is contained.

Scenario 2: an agent automating developer tooling

Now let's consider an AI agent that helps developers by automatically creating GitHub issues from Slack conversations, assigning them to the right team members, and updating project boards.

With API keys

A GitHub personal access token with full repo access sits in the agent's configuration:

python

GITHUB_TOKEN = "ghp_full_repo_access_token"

headers = {"Authorization": f"token {GITHUB_TOKEN}"}

# Agent can do anything

requests.post("https://api.github.com/repos/company/prod/issues",

headers=headers, json={"title": "Bug found"})

# Including destructive operations it shouldn't

requests.delete("https://api.github.com/repos/company/prod", headers=headers)

The static token allows the agent to delete repositories, modify branch protection rules, or leak source code. There's no time limit, no scope restriction, and no clear audit trail linking actions back to the specific agent instance.

With OAuth

The agent requests narrowly scoped access:

python

# Agent requests specific permissions

oauth = OAuth2Session(

client_id='dev_agent_client',

scope=['repo:issues:write', 'project:read']

)

# Token is bound to specific repos and actions

token = oauth.fetch_token(...)

# Agent can create issues

oauth.post('https://api.github.com/repos/company/prod/issues',

json={"title": "Bug found"})

# But cannot delete repos or access code

oauth.delete('https://api.github.com/repos/company/prod') # 403 Forbidden

OAuth scopes restrict the agent to creating issues and reading project boards. The token expires after an hour and can be revoked if the agent starts creating spam issues. GitHub's audit log shows exactly which agent, acting under whose authority, performed each action.

Scenario 3: multi-agent workflows

Now consider an agentic system where multiple specialized agents collaborate: one agent researches competitors, another drafts marketing copy, and a third publishes content to your CMS.

With API keys

All agents share the same CMS API key:

python

SHARED_CMS_KEY = "cms_full_access_key"

# Research agent (should only read)

research_agent.set_credentials(SHARED_CMS_KEY)

# Writing agent (should read and draft)

writing_agent.set_credentials(SHARED_CMS_KEY)

# Publishing agent (should publish drafts)

publishing_agent.set_credentials(SHARED_CMS_KEY)

# All have identical, full access

This creates multiple problems. The research agent can accidentally publish content. The writing agent can delete published articles. If any agent is compromised, all three are compromised because they share credentials. Server logs show "CMS key used" but not which agent or why. There's no way to represent each agent's actual role and permissions.

With OAuth

Each agent gets its own identity and scoped token:

python

# Research agent - read-only access

research_oauth = OAuth2Session(

client_id='research_agent',

scope=['content:read']

)

# Writing agent - read and draft

writing_oauth = OAuth2Session(

client_id='writing_agent',

scope=['content:read', 'content:draft']

)

# Publishing agent - publish only

publishing_oauth = OAuth2Session(

client_id='publishing_agent',

scope=['content:publish']

)

Each agent operates with least privilege. The research agent cannot modify content. The writing agent cannot publish. The publishing agent cannot delete. If the writing agent is compromised through prompt injection, the attacker gains only drafting capabilities, not publishing or deletion. Audit logs clearly attribute each action to a specific agent identity with specific permissions.
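One way to see the difference in blast radius is to compute what an attacker gains when a single agent is compromised. The sketch below reuses the scope names from the example; `content:delete` is a hypothetical scope added to stand in for the deletion capability a full-access key would carry.

```python
# Everything a full-access CMS key can do (content:delete is hypothetical).
ALL_CMS_ACTIONS = frozenset(
    {"content:read", "content:draft", "content:publish", "content:delete"}
)

# Per-agent scopes, as issued in the OAuth setup above.
AGENT_SCOPES = {
    "research_agent": frozenset({"content:read"}),
    "writing_agent": frozenset({"content:read", "content:draft"}),
    "publishing_agent": frozenset({"content:publish"}),
}


def exposed_actions(compromised_agent: str, shared_key: bool) -> frozenset:
    """Return the actions an attacker gains by compromising one agent."""
    if shared_key:
        # A shared key carries every permission, whichever agent leaks it.
        return ALL_CMS_ACTIONS
    return AGENT_SCOPES[compromised_agent]
```

With the shared key, compromising the writing agent exposes all four actions; with per-agent tokens, it exposes only reading and drafting.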

Why the Model Context Protocol mandates OAuth

The Model Context Protocol (MCP) is an open standard defining how AI systems should securely interact with external tools and data sources. MCP provides a structured way for agents to discover available tools, understand their capabilities, and invoke them safely.

While MCP makes authorization optional for low-risk scenarios, it mandates OAuth 2.1 when agents access user data or act on behalf of users, precisely the scenarios where autonomous agents make real-time decisions about tools and actions.

MCP encourages OAuth 2.1 because it lets every tool explicitly define the access scopes it requires. When an agent wants to use a tool, it must authenticate using OAuth flows suited to agents running on servers without a traditional user interface (i.e., headless). This typically means the device flow (where users authorize on a separate device by entering a code) or the authorization code flow with Proof Key for Code Exchange (PKCE), a security extension that stops an intercepted authorization code from being redeemed by requiring a one-time secret (the code verifier) that only the legitimate client knows. These flows ensure users grant explicit consent for the specific permissions each tool needs.
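For readers who haven't implemented PKCE, here is a minimal sketch of the client side using the S256 method from RFC 7636. The function names are illustrative; most OAuth client libraries generate these values for you.

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    """Generate a high-entropy, URL-safe verifier (43 characters from 32 random bytes)."""
    return secrets.token_urlsafe(32)


def make_code_challenge(code_verifier: str) -> str:
    """S256 method: base64url(SHA-256(verifier)) with '=' padding removed."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```

The client sends the challenge in the authorization request and keeps the verifier private until the token request. The `code_challenge` in the authorization request shown earlier matches the worked example in RFC 7636, Appendix B, whose verifier is `dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk`.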

MCP requires auditable, revocable, and safe delegation of authority. Each tool invocation is traceable to a specific token, scope set, and user consent. When an agent misbehaves or a tool becomes compromised, admins can revoke access immediately without affecting other tools or redeploying code.

API keys lack the granularity, traceability, and revocation mechanisms MCP's security model requires. By mandating OAuth, MCP ensures agentic systems operate within clearly defined, user-controlled permission boundaries.

When API keys still make sense

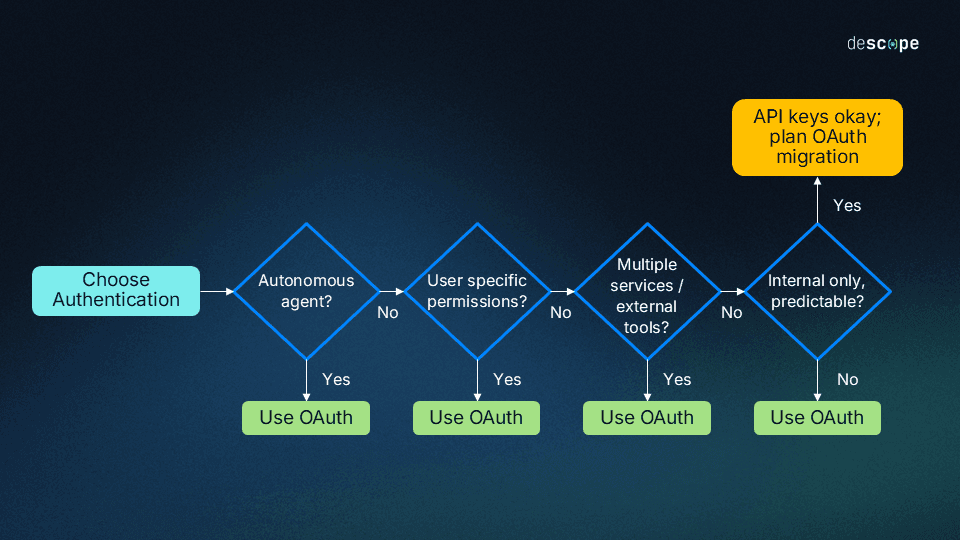

OAuth isn't always the right choice. There are legitimate scenarios where API keys remain the more practical option.

Non-agentic M2M automation: Internal microservice calls on private networks don't need OAuth's complexity. When Service A calls Service B within your infrastructure, both under your complete control, a rotated API key works fine. The same applies to simple backend processes with predictable behavior (e.g., nightly ETL jobs, monitoring scripts, deployment pipelines). These systems follow deterministic logic with no autonomous decision-making.

Low-risk or high-control environments: Internal admin tools accessible only from corporate networks, behind VPNs, with IP restrictions and vault-managed secrets, can safely use API keys. The infrastructure itself enforces security boundaries.

Startups or prototypes: Early-stage products validating product-market fit can use API keys to move fast. However, OAuth migration might be required for scaling.

Did you know? Major AI providers like OpenAI and Anthropic still use API keys for millions of users because they're authenticating developer applications, not autonomous agents acting on behalf of end users. When those applications need to perform user-specific actions with granular permissions, OAuth becomes necessary at that layer.

Quick implementation guidance and best practices

Use this decision tree to choose authentication based on your requirements:

For OAuth in agentic AI systems

Implement narrow scopes: Avoid wildcard permissions like `admin:*` or `full_access`. Define granular scopes that match actual operations: `calendar:read`, `issues:create`, `content:draft`.

Prefer short-lived access tokens with automatic refresh: Set access token expiration to one hour or less. Use refresh tokens to obtain new access tokens without user intervention.

Require explicit user consent flows: Users must see and approve exactly what permissions they're granting.

Bind agent identity to token issuance: Each agent instance should have its own client ID. This allows per-agent revocation and helpful audit trails.

Enable complete audit logging: Log every token issuance, refresh, and API call with agent identity, scope, timestamp, and user context.

Use Authorization Code Flow with PKCE or Device Code Flow: PKCE protects the redirect-based flow for agents that cannot safely store a client secret, while the device flow lets headless agents obtain user consent on a separate device without handling browser redirects themselves.
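The "short-lived tokens with automatic refresh" practice above can be sketched as a small helper. The token dictionary shape (`access_token`, `refresh_token`, `expires_at`) and the 60-second skew are assumptions for illustration; `refresh` stands in for whatever function calls your authorization server's token endpoint.

```python
import time
from typing import Callable, Optional

REFRESH_SKEW_SECONDS = 60  # assumption: refresh slightly before actual expiry


def needs_refresh(expires_at: float, now: Optional[float] = None,
                  skew: float = REFRESH_SKEW_SECONDS) -> bool:
    """True once the token is expired or within `skew` seconds of expiring."""
    now = time.time() if now is None else now
    return now >= expires_at - skew


def get_access_token(token: dict, refresh: Callable[[str], dict],
                     now: Optional[float] = None) -> dict:
    """Return a usable token, calling refresh(refresh_token) only when needed."""
    if needs_refresh(token["expires_at"], now):
        return refresh(token["refresh_token"])
    return token
```

Refreshing a little early avoids the case where a token passes the local check but expires while the request is in flight.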

For API keys

Rotate regularly and automatically: Implement 90-day rotation schedules with zero-downtime deployment strategies.

Store keys in secure vaults: Use a dedicated secret management system such as Google Cloud Secret Manager or a similar service.

Apply API rate limits and behavioral analytics: Monitor for unusual patterns that might indicate compromise.

Restrict IP addresses or environments: Limit key usage to known networks or infrastructure.

Transition to OAuth once agents enter the ecosystem: Plan this migration before adding autonomous capabilities.
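For the rotation advice above, one common way to avoid downtime is to let the old and new keys overlap for a short grace period. The sketch below assumes a hypothetical `KeyRecord` shape and a 7-day grace window; only the 90-day schedule comes from the guidance above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_KEY_AGE = timedelta(days=90)   # rotation schedule from the guidance above
GRACE_PERIOD = timedelta(days=7)   # assumption: overlap window after rotation


@dataclass
class KeyRecord:
    key_id: str
    created_at: datetime
    superseded_at: Optional[datetime] = None  # set when a replacement is issued


def is_due_for_rotation(record: KeyRecord, now: datetime) -> bool:
    """Flag keys that have reached the maximum allowed age."""
    return now - record.created_at >= MAX_KEY_AGE


def is_accepted(record: KeyRecord, now: datetime) -> bool:
    """Accept the current key, and a superseded key only during the grace period."""
    if record.superseded_at is None:
        return True
    return now < record.superseded_at + GRACE_PERIOD
```

During the overlap, services can pick up the new key on their own deployment schedule, and the old key stops working automatically once the window closes.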

Conclusion

Agentic AI is transforming API authentication requirements. The simplicity of API keys comes at a cost: no standardized scopes, no built-in expiration, and limited auditability. OAuth provides the delegation model, scoped access, and security controls that autonomous systems demand. With protocols like MCP mandating OAuth for tool access, the industry is converging on a clear standard for intelligent, autonomous systems.

OAuth implementation is becoming necessary for AI agents. Descope provides purpose-built authentication for agentic AI systems, with pre-configured OAuth flows, granular scope management, and agent identity infrastructure that scales from prototype to production.