
This tutorial was written by Manish Hatwalne, a developer with a knack for demystifying complex concepts and translating "geek speak" into everyday language. Visit Manish's website to see more of his work!

AI coding assistants have split into two distinct philosophies. Some stay close to your editor, offering suggestions and edits without disrupting your flow. Others act more like agents, reasoning across your entire codebase before touching a single file. Gemini Code Assist and Claude Code sit at opposite ends of that spectrum, which makes this comparison especially interesting.

Gemini Code Assist is Google's IDE-native assistant, deeply integrated with VS Code and JetBrains IDEs, with a generous free tier. Claude Code started as a terminal-native agent and has since gained a decent VS Code extension. The pricing difference is stark: as of April 2026, Gemini Code Assist has a free tier and Claude Code does not. Since AI-assisted coding can consume a lot of tokens, that is a real differentiator, and it deserves to be called out early rather than buried in a comparison table.

This is the third article in a series comparing Claude Code with other AI coding tools; earlier articles pitted it against OpenAI Codex and GitHub Copilot. As before, both tools get the same starting point: a minimal FastAPI app with no authentication and no tests. Same repo, same prompt, same acceptance criteria.

Setup and first impressions

This comparison tests both tools through their VS Code extensions. According to the latest Stack Overflow Developer Survey, over 75% of developers use VS Code, which makes it the most representative testing ground. As for models, Gemini Code Assist's free tier runs on `Gemini 3 Flash Preview` (results with the “Pro” version were similar, not significantly better), while Claude Code defaults to `Claude Sonnet 4.6`. Claude Code leads in adoption, with over 10.1 million VS Code installs as of April 2026 compared to 3.7 million for Gemini Code Assist (despite its free tier).

Both extensions install with a single click, but Claude Code adds one extra step: you need to install its native binary.

```shell
curl -fsSL https://claude.ai/install.sh | bash
```

This extra step exists because the VS Code extension is essentially just a wrapper (Gemini integrates more deeply). The actual work happens in the binary, which runs your code, reads project files, and executes commands. The extension simply brings that capability into your editor through a sidebar interface, complete with conversation history, tabbed workflows, plan previews before applying changes, and inline diffs for side-by-side comparisons. If you’d rather stay in the terminal, you can use the CLI directly as well.

Getting started: auth and first impressions

Getting started with Gemini is straightforward: sign in with a personal Google account (note that some organizational accounts may not be eligible), and you're in. The VS Code extension surfaces two important notices upfront: your code and conversations may be used to improve Google AI, and you should review its output carefully.

As noted in previous articles, getting started with Claude Code takes a bit more effort. It assumes you have either a Claude subscription or Anthropic Console access. If you only have an API key without Console access, you'll likely hit an authentication error that looks deceptively like a misconfiguration. This is a known issue with a documented workaround.

Both tools open as a familiar chat interface in VS Code, though Gemini's panel sits on the left side of the editor while Claude Code's appears on the right (similar to Codex and Copilot).

Below the chat input, Gemini shows a Context items section listing which file is in scope (main.py), with an option to add more, plus a toggle to switch to Agent mode (Preview). Claude Code offers a mode switcher with Ask before edits, Edit automatically, and Plan mode, paired with an Effort slider (Low to Max). Anthropic is also rolling out an auto mode.

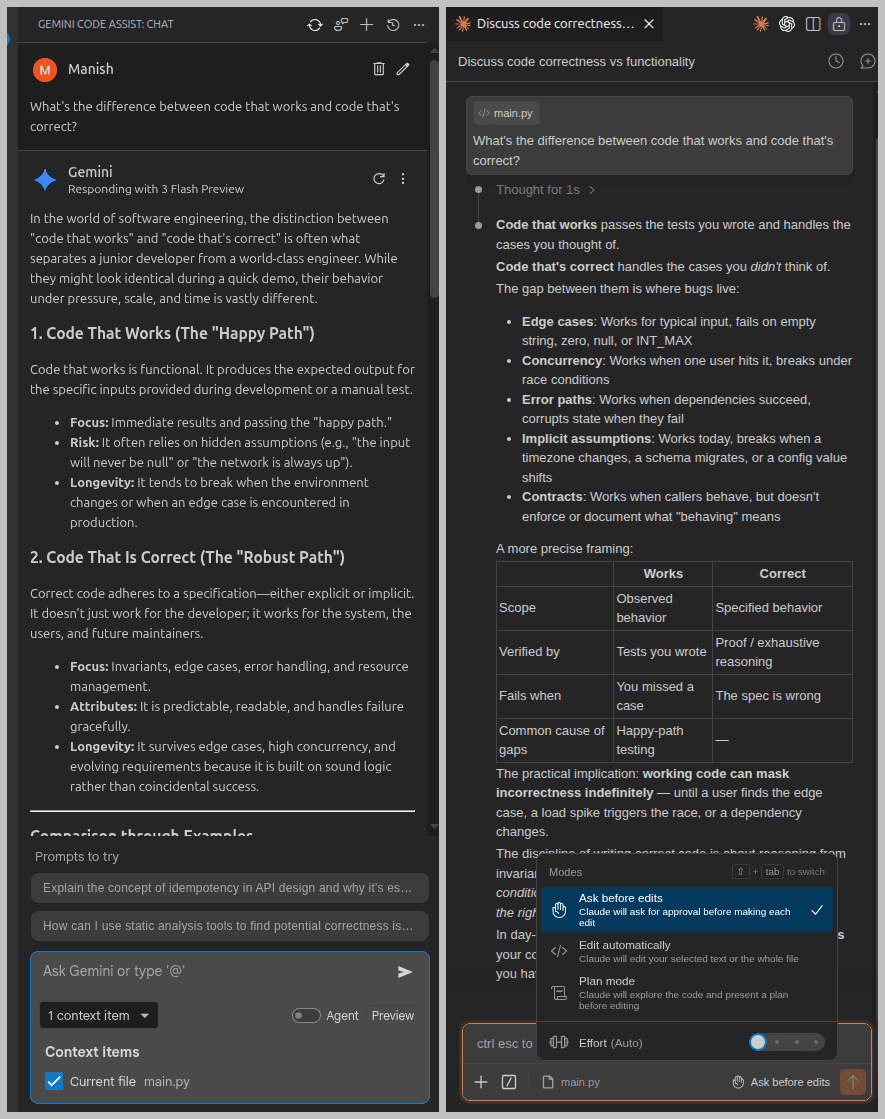

To get a feel for each tool's reasoning style, both were given the same open-ended prompt:

Prompt:

-------

What's the difference between code that works and code that's correct?

Gemini opens with a junior vs. world-class engineer framing, then builds a clean two-column mental model: "Happy Path" vs. "Robust Path." It's well-structured and accessible, but stays within familiar software engineering vocabulary.

Claude Code skips the preamble and leads with a sharp, memorable distinction: working code handles the cases you thought of, correct code handles the ones you didn't. It gets specific fast, grounding the concept in concrete failure modes like race conditions, timezone shifts, and implicit contracts, before wrapping up with a comparison table.

Comparing Gemini and Claude Code for JWT auth

As with the earlier comparisons, the starting point for both tools was the same: a basic FastAPI app with three dummy users and a SQLite database. No authentication, no password column, no tests. Both received this identical prompt:

Prompt:

-------

This FastAPI app has no authentication. Add JWT-based auth: a /login endpoint that returns an access token, a /me route protected by a valid token, and a /refresh endpoint. Use bcrypt for password hashing and python-jose for JWT signing. Add pytest tests covering all three endpoints.

The library choices are intentional. Without explicit guidance, most AI tools reach for passlib, which hasn't shipped a release since 2020 and breaks with newer bcrypt versions. Naming bcrypt and python-jose explicitly removes that ambiguity before it becomes a debugging session.

JWT auth with Gemini Code Assist

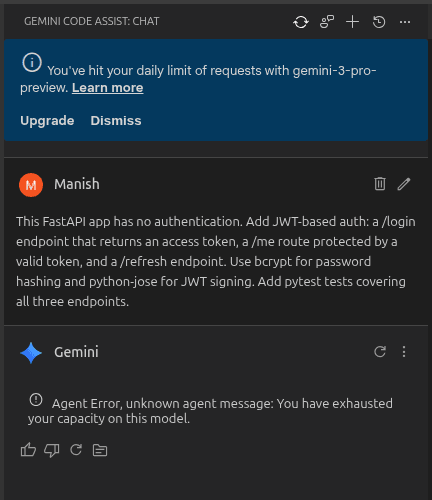

Gemini Code Assist has an Agent mode (Preview), which seemed like the natural choice for a multi-file task. It wasn't. The very first prompt failed with:

Agent Error, unknown agent message: You have exhausted your capacity on this model.

Agent mode sends multiple requests under the hood, and it doesn't appear to be fully functional in preview yet. Switching to standard chat mode worked without issues.

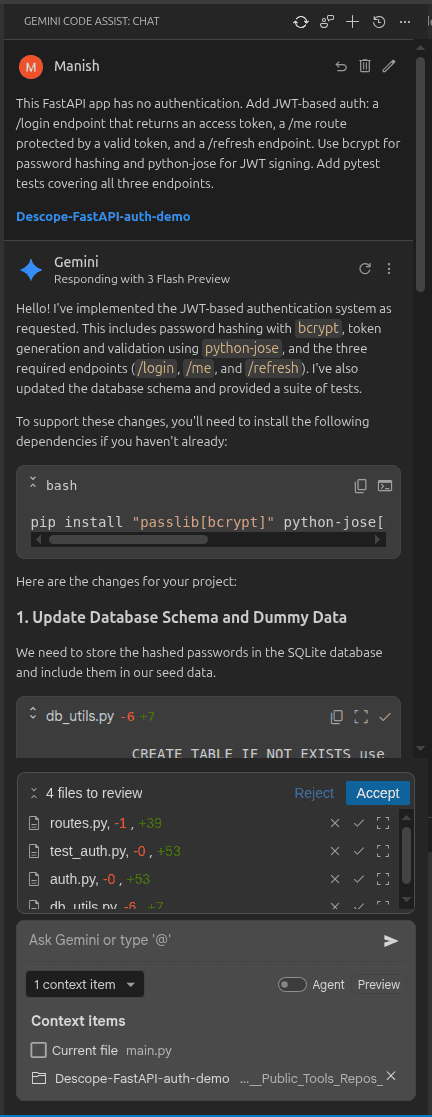

With the FastAPI project loaded as context, Gemini got straight to work on the JWT implementation. It didn't ask any clarifying questions and, notably, showed no status messages while working. When done, it displayed this message along with a summary of its changes:

Gemini:

------------

Hello! I've implemented the JWT-based authentication system as requested. This includes password hashing with bcrypt, token generation and validation using python-jose, and the three required endpoints (/login, /me, and /refresh). I've also updated the database schema and provided a suite of tests.

For the authentication logic, Gemini created a new auth.py file for password hashing and token operations. However, it added all three new routes (/login, /me, /refresh) directly into the existing routers.py, and added a hashed_password column to the user_profiles table in db_utils.py. The schema change is the right call, though no migration code was included for existing databases.

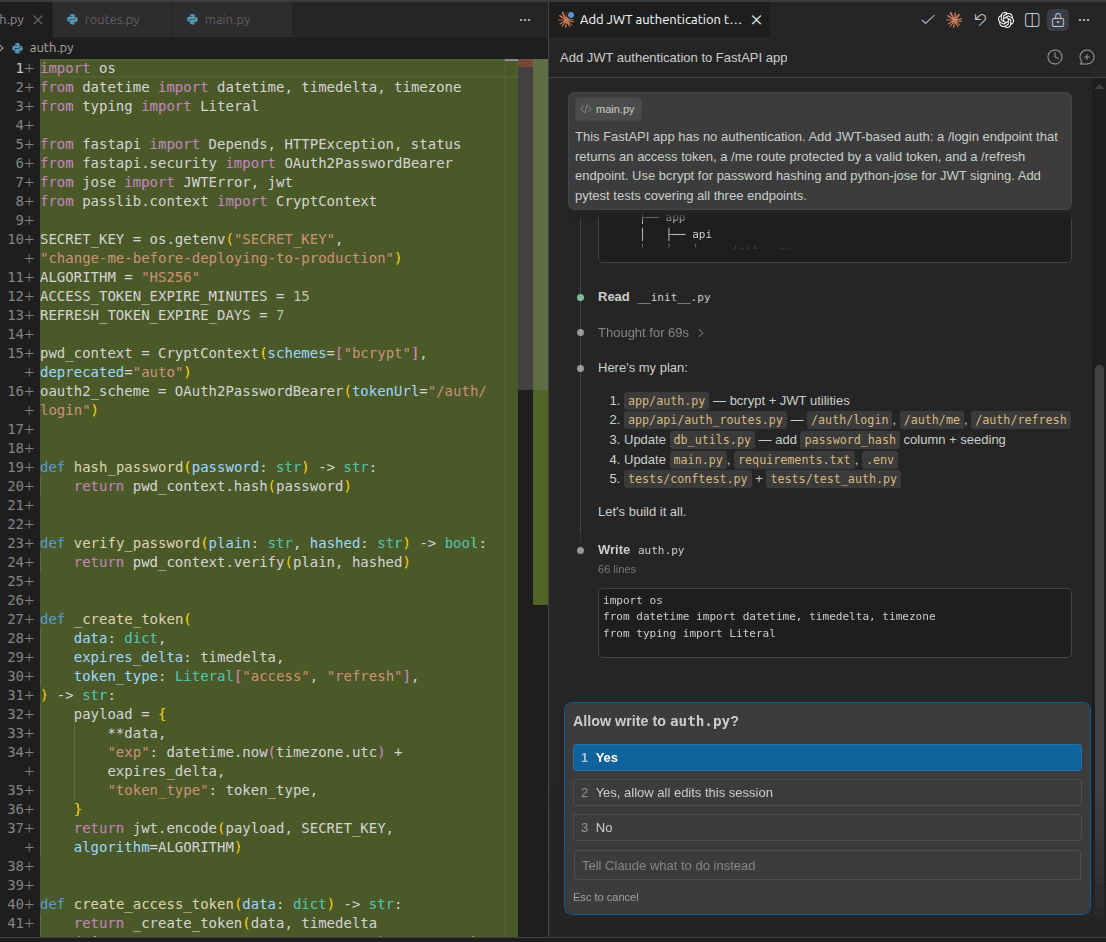

Here's what the initial session looked like:

Overall, Gemini touched four files. It created auth.py and test_auth.py, and modified db_utils.py and routers.py. By default, it presents all changes for review before applying them. As seen in the screenshot, you can "accept" everything at once or review changes file by file, which is a sensible default for developers who want to control what goes into their codebase.

Coding observations and problems

Gemini completed the implementation in around six minutes. It did not update requirements.txt; instead, it suggested installing the new dependencies manually:

Gemini:

------------

To support these changes, you'll need to install the following dependencies if you haven't already:

```shell
pip install "passlib[bcrypt]" python-jose[cryptography] python-multipart pytest httpx
```

It also didn't run any of the tests it generated.

Test suite

Before running any tests, the SQLite database had to be created from scratch since Gemini hadn't included migration code for its schema changes.

Gemini wrote four tests in test_auth.py. Running them manually revealed three failures:

```text
$ pytest -v
================================== test session starts ==================================
collected 4 items

app/api/test_auth.py::test_login_success FAILED [ 25%]
app/api/test_auth.py::test_login_failure PASSED [ 50%]
app/api/test_auth.py::test_me_protected FAILED [ 75%]
app/api/test_auth.py::test_refresh_token FAILED [100%]

================================== short test summary info ==================================
FAILED app/api/test_auth.py::test_login_success - assert 401 == 200
FAILED app/api/test_auth.py::test_me_protected - KeyError: 'access_token'
FAILED app/api/test_auth.py::test_refresh_token - KeyError: 'refresh_token'
================================== 3 failed, 1 passed, 1 warning in 1.31s ==================================
```

Deeper examination of Gemini’s code uncovered the root cause: Gemini had hardcoded password hashes for the seeded users in db_utils.py, and those hashes were incorrect. As a result, pwd_context.verify(plain_password, hashed_password) failed to verify the correct password (password123) at login.

```python
# ---------->>> Original (incorrect), hardcoded password hash for "password123" by Gemini Code Assist
{"name": "Manish", "email": "manish@example.com", "bio": "Loves building stuff and writing.", "hashed_password": "$2b$12$EixZaYVK1fsbw1ZfbX3OXePaWxn96p36WQoeG6L6s57OT697.t95O"}, # password123
{"name": "Jakkie", "email": "jakkie@example.com", "bio": "Managing many responsibilities", "hashed_password": "$2b$12$EixZaYVK1fsbw1ZfbX3OXePaWxn96p36WQoeG6L6s57OT697.t95O"},
{"name": "Kirstin", "email": "kirstin@example.com", "bio": "Excellent editor", "hashed_password": "$2b$12$EixZaYVK1fsbw1ZfbX3OXePaWxn96p36WQoeG6L6s57OT697.t95O"},
```

This required a manual fix, replacing the hardcoded hashes with proper hashing calls:

```python
# ---------->>> Manually corrected hashing code to ensure the password hash is valid and corresponds to "password123"
{"name": "Manish", "email": "manish@example.com", "bio": "Loves building stuff and writing.", "hashed_password": get_password_hash("password123")}, # password123
{"name": "Jakkie", "email": "jakkie@example.com", "bio": "Managing many responsibilities", "hashed_password": get_password_hash("password123")},
{"name": "Kirstin", "email": "kirstin@example.com", "bio": "Excellent editor", "hashed_password": get_password_hash("password123")},
```

With the fix applied and the database recreated, all four tests passed. There were some deprecation warnings, but no serious red flags.

```text
$ pytest -v
================================== test session starts ==================================
collected 4 items

app/api/test_auth.py::test_login_success PASSED [ 25%]
app/api/test_auth.py::test_login_failure PASSED [ 50%]
app/api/test_auth.py::test_me_protected PASSED [ 75%]
app/api/test_auth.py::test_refresh_token PASSED [100%]

================================== 4 passed in 1.96s ==================================
```

Manual cURL verification confirmed that the rest of Gemini's authentication flow worked. The /login endpoint accepts credentials as form data, as the OAuth2 password flow requires.

There was one serious issue: the /me endpoint returned the hashed password in its response:

```text
$ curl -X 'POST' \
  'http://127.0.0.1:8000/login' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'grant_type=password&username=manish%40example.com&password=password123'
{"access_token": "eyJhbGci....", "refresh_token": "eyJhbGciO....", "token_type": "bearer"}

$ curl -X 'GET' \
  'http://127.0.0.1:8000/me' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer eyJhbGci....'
{"id":1,"name":"Manish","email":"manish@example.com","bio":"Loves building stuff and writing.", "hashed_password":"<revealed-hash-password>"}
```

JWT auth with Claude Code

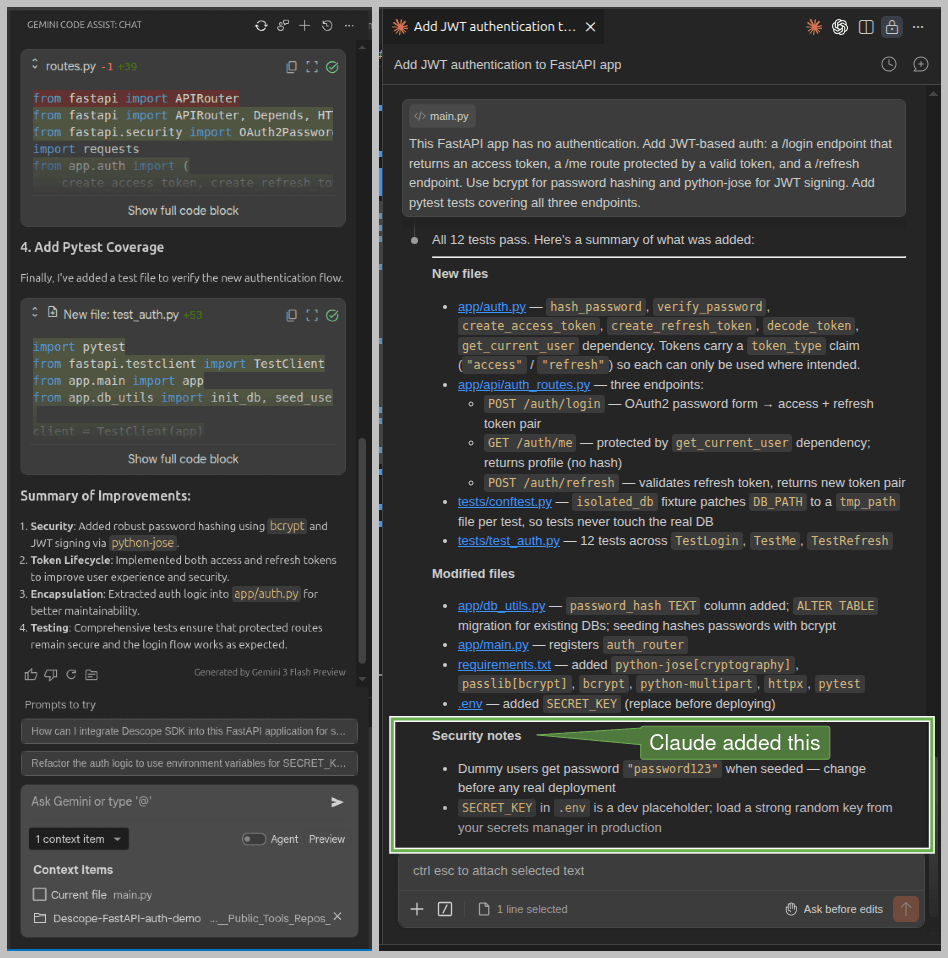

As covered in the previous two articles, Claude Code scans the entire codebase first, lays out a plan, then asks for confirmation before making any changes. True to form, it asked permission before creating auth.py, with the option to approve that single edit or allow all edits for the session. It also updated requirements.txt and ran a bash command to install the new dependencies.

Unlike Gemini, Claude Code displayed status updates as it worked through the codebase.

Coding observations and issues

Claude Code delivered a complete, working implementation with passing tests in under five minutes. It factored authentication logic into a dedicated auth.py module, introduced three new routes, and wired them into the main application:

```python
app.include_router(auth_router)
```

It also defined a separate auth_router with its own namespace, grouping all auth endpoints under /auth. Routes like /login, /me, and /refresh become /auth/login, /auth/me, and /auth/refresh, keeping them cleanly separated from unrelated endpoints.

Gemini skipped namespacing and added everything directly to the existing routers.py. That's fine for small projects, but it gets unwieldy as the codebase grows. Claude Code's structure is more scalable and maintainable.

The authentication flow worked correctly on the first pass, with no fixes required.

Test suite

Gemini generated four tests; Claude Code wrote 12. The difference isn't just in count but in structure and coverage.

Claude Code organized its tests using pytest fixtures defined in a dedicated conftest.py, keeping shared setup (database initialization, authentication headers, token creation) cleanly reusable across test cases. Gemini defined its fixture within the same test file, primarily for database setup.
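Fixtures defined in a `conftest.py` are discovered by pytest automatically and shared by every test module in that directory, which is what keeps setup code out of the individual test files. A minimal sketch of the idea using pytest and the standard library; `make_test_db` and the `db` fixture are hypothetical names, not Claude Code's actual fixtures, and the stored hash is a placeholder.

```python
# conftest.py
import sqlite3

import pytest


def make_test_db(path) -> sqlite3.Connection:
    """Create a database with the user_profiles schema and one seeded user."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE user_profiles (id INTEGER PRIMARY KEY, name TEXT, "
        "email TEXT UNIQUE, bio TEXT, hashed_password TEXT)"
    )
    conn.execute(
        "INSERT INTO user_profiles (name, email, bio, hashed_password) VALUES (?, ?, ?, ?)",
        ("Manish", "manish@example.com", "Loves building stuff and writing.", "<placeholder-hash>"),
    )
    conn.commit()
    return conn


@pytest.fixture
def db(tmp_path):
    """Give each test its own SQLite file under pytest's tmp_path, closed afterwards."""
    conn = make_test_db(tmp_path / "test.db")
    yield conn
    conn.close()
```

Any test that declares `db` as a parameter, such as `def test_lookup(db): ...`, receives a fresh, isolated database without importing anything.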

Here's the full test run from Claude Code:

```text
(venv) $ pytest -v
===================================== test session starts ====================================
collected 12 items

tests/test_auth.py::TestLogin::test_success_returns_both_tokens PASSED [ 8%]
tests/test_auth.py::TestLogin::test_wrong_password_is_401 PASSED [ 16%]
tests/test_auth.py::TestLogin::test_unknown_email_is_401 PASSED [ 25%]
tests/test_auth.py::TestLogin::test_missing_credentials_is_422 PASSED [ 33%]
tests/test_auth.py::TestMe::test_returns_profile_with_valid_token PASSED [ 41%]
tests/test_auth.py::TestMe::test_no_token_is_401 PASSED [ 50%]
tests/test_auth.py::TestMe::test_malformed_token_is_401 PASSED [ 58%]
tests/test_auth.py::TestMe::test_refresh_token_rejected_as_access_token PASSED [ 66%]
tests/test_auth.py::TestRefresh::test_returns_new_token_pair PASSED [ 75%]
tests/test_auth.py::TestRefresh::test_new_access_token_is_usable PASSED [ 83%]
tests/test_auth.py::TestRefresh::test_access_token_rejected_as_refresh_token PASSED [ 91%]
tests/test_auth.py::TestRefresh::test_invalid_refresh_token_is_401 PASSED [100%]

===================================== 12 passed in 4.51s =====================================
```

These tests go beyond the obvious. For example, test_refresh_token_rejected_as_access_token and test_access_token_rejected_as_refresh_token explicitly enforce that the two token types cannot be used interchangeably, a subtle but important security boundary that Gemini (and other tools evaluated in this series) didn't validate.

Manual cURL verification confirmed everything worked as expected. Claude Code also follows the OAuth2 spec, accepting login credentials as form data:

```text
$ curl -X 'POST' \
  'http://127.0.0.1:8000/auth/login' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'grant_type=password&username=manish%40example.com&password=password123'
{"access_token":"eyJhbGci....", "refresh_token":"eyJhbGciO....","token_type":"bearer"}

$ curl -X 'GET' \
  'http://127.0.0.1:8000/auth/me' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer eyJhbGci....'
{"id":1,"name":"Manish","email":"manish@example.com","bio":"Loves building stuff and writing."}
```

Unlike Gemini's version, Claude Code's /me endpoint doesn't expose the sensitive hashed_password field, which is the correct and secure approach.

Code quality and security awareness

Looking at the actual implementations makes the differences in code quality and security practices concrete. Here's how they compare.

Code quality with Gemini Code Assist

Gemini's implementation partially worked, but several issues would cause real problems in production.

With Gemini, the /me endpoint leaks hashed_password. Here's the route Gemini produced:

```python
@router.get("/me")
def read_users_me(current_user: dict = Depends(get_current_user)):
    return current_user


def get_current_user(token: str = Depends(oauth2_scheme)):
    ...
    user = get_user_by_email(email)
    if user is None:
        raise credentials_exception
    return dict(user)
```

The get_current_user(...) function fetches the full user row and returns it as a dict, which read_users_me sends directly to the client. Since user_profiles includes hashed_password, that field ends up in the response. This is a serious security issue; even a bcrypt hash gives an attacker a target for offline cracking, and its presence in the response indicates no filtering was applied to the API's response.

No database migration for the new column. Gemini correctly added a hashed_password column to user_profiles in db_utils.py, but included no migration logic for existing rows. That leaves the developer to either write an ALTER TABLE migration manually or recreate the database from scratch. That's a serious flaw in its database handling.
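For SQLite, a minimal migration checks whether the column exists and adds it if not: `PRAGMA table_info` lists a table's columns, and `ALTER TABLE ... ADD COLUMN` appends one without touching existing rows. The sketch uses only the standard library; the table and column names come from the article, while the function name and the nullable column are assumptions (existing users would still need a password set before they can log in).

```python
import sqlite3


def ensure_hashed_password_column(conn: sqlite3.Connection) -> bool:
    """Add user_profiles.hashed_password if it is missing. Returns True if a migration ran."""
    # Each PRAGMA table_info row is (cid, name, type, notnull, dflt_value, pk).
    columns = {row[1] for row in conn.execute("PRAGMA table_info(user_profiles)")}
    if "hashed_password" in columns:
        return False  # already migrated, so this is safe to call on every startup
    # Nullable on purpose: SQLite cannot add a NOT NULL column without a non-NULL
    # default, and existing rows have no hash yet.
    conn.execute("ALTER TABLE user_profiles ADD COLUMN hashed_password TEXT")
    conn.commit()
    return True


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE user_profiles (id INTEGER PRIMARY KEY, name TEXT, email TEXT, bio TEXT)")
print(ensure_hashed_password_column(conn))  # True
print(ensure_hashed_password_column(conn))  # False
```

Running a check like this at startup lets an existing database pick up the new column instead of forcing the developer to delete and recreate it.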

Three out of four tests failed. Gemini wrote four tests, but three failed because the seed data contained incorrectly hashed passwords. As a result, verify_password(...) always returned False for seeded users. The tests were checking the right things; the data they depended on was just broken. This is difficult to debug since hashing errors are not obvious. Gemini also didn't run any tests as part of its implementation.

Missing requirements.txt update and no dependency installation. Gemini suggested the new packages but didn't update requirements.txt or run any install commands. Anyone cloning the repo would hit import errors immediately. It's a small omission with an outsized impact on any shared codebase.

If you're curious, here's the full codebase.

Code quality with Claude Code

Claude Code got everything right on the first pass: updated requirements.txt, added the hashed_password column with properly hashed seed data, included migration logic for existing databases, introduced a namespaced auth_router, used OAuth2PasswordRequestForm for spec-compliant form handling, wrote 12 tests covering edge cases including token type misuse, and explicitly filtered the /me response to safe fields only.

Here is Claude Code's /me implementation:

```python
@router.get("/me")
def me(current_user: dict = Depends(get_current_user)):
    """Return the profile of the currently authenticated user."""
    email = current_user.get("sub")
    user = get_user_by_email(email)
    if not user:
        raise HTTPException(status_code=status.HTTP_404_NOT_FOUND, detail="User not found")
    return {
        "id": user["id"],
        "name": user["name"],
        "email": user["email"],
        "bio": user["bio"],
    }
```

The response is explicitly constructed from only the fields that should be visible to the client. No raw database row, no accidental leakage. It also handles the case where a valid token references a user that no longer exists, returning a proper HTTP 404 rather than a silent failure.

No iterations, no broken tests, no missing dependencies. Claude Code thinks about the entire system, not just the immediate task.

Full codebase: JWT Auth by Claude Code

Security awareness

The security gaps stem from the code quality issues, but a few are worth calling out explicitly.

Gemini set access token expiry to 30 minutes; Claude Code used 15 minutes. Both used seven days for refresh tokens and HS256 for signing. However, Gemini hardcoded SECRET_KEY as a constant in auth.py. Claude Code read it from the environment. A hardcoded secret is one of the most common and serious JWT vulnerabilities: anyone with repository access has the signing key and can forge valid tokens for any user.
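A minimal pattern for the secret is to read it from the environment at startup and fail fast when it is missing, instead of shipping a default in source. The sketch uses only the standard library; the variable name `JWT_SECRET_KEY` and the 32-character minimum are assumptions, while the lifetimes mirror Claude Code's settings described above.

```python
import os
from datetime import timedelta

ACCESS_TOKEN_LIFETIME = timedelta(minutes=15)
REFRESH_TOKEN_LIFETIME = timedelta(days=7)
ALGORITHM = "HS256"


def load_secret_key(env_var: str = "JWT_SECRET_KEY", min_length: int = 32) -> str:
    """Read the HS256 signing key from the environment and refuse to start without one."""
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"{env_var} is not set; refusing to start without a signing key")
    if len(key) < min_length:
        raise RuntimeError(f"{env_var} is shorter than {min_length} characters")
    return key
```

A suitable random value can be generated with Python's `secrets` module, for example `secrets.token_urlsafe(32)`, and supplied through the deployment environment or a secrets manager rather than committed to the repository.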

As discussed previously, Gemini's /me endpoint returned hashed_password in the response. Claude Code returned only the fields a client should see. This doesn't show up in tests unless you explicitly test response shape, but it matters in production and should be rejected in any PR.

At the end of its run, Claude Code flagged the weak dummy passwords and placeholder secret key without being asked. Gemini raised nothing. Across all three articles in this series, Claude Code is the only tool that consistently treated security as something worth surfacing, rather than leaving it for the developer to catch.

Here's how their completion messages compared:

Workflow integration and day-to-day feel

Working with Gemini Code Assist feels like having a collaborator embedded directly in your editor. Its strength is tight IDE integration and quick, localized suggestions that complement your own edits. You stay in control, iterating step by step, accepting or tweaking suggestions as you go.

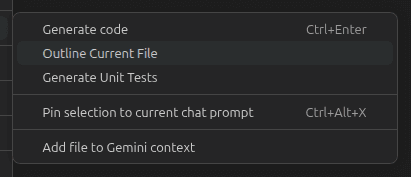

Code quality concerns aside, its VS Code integration and inline completions are mostly solid. Here's Gemini's context menu when you select a code snippet in the IDE:

That said, Gemini can lose broader context across multiple files, especially on tasks that span different parts of the codebase. The experience is closer to hands-on pair programming, where you're actively guiding the process throughout.

Claude Code feels more like delegating to a competent senior developer. It holds context across files with impressive consistency, understands how changes ripple through the system, and handles follow-up corrections without losing coherence. Rather than piecemeal suggestions, it thinks in complete solutions and gets most things right on the first pass.

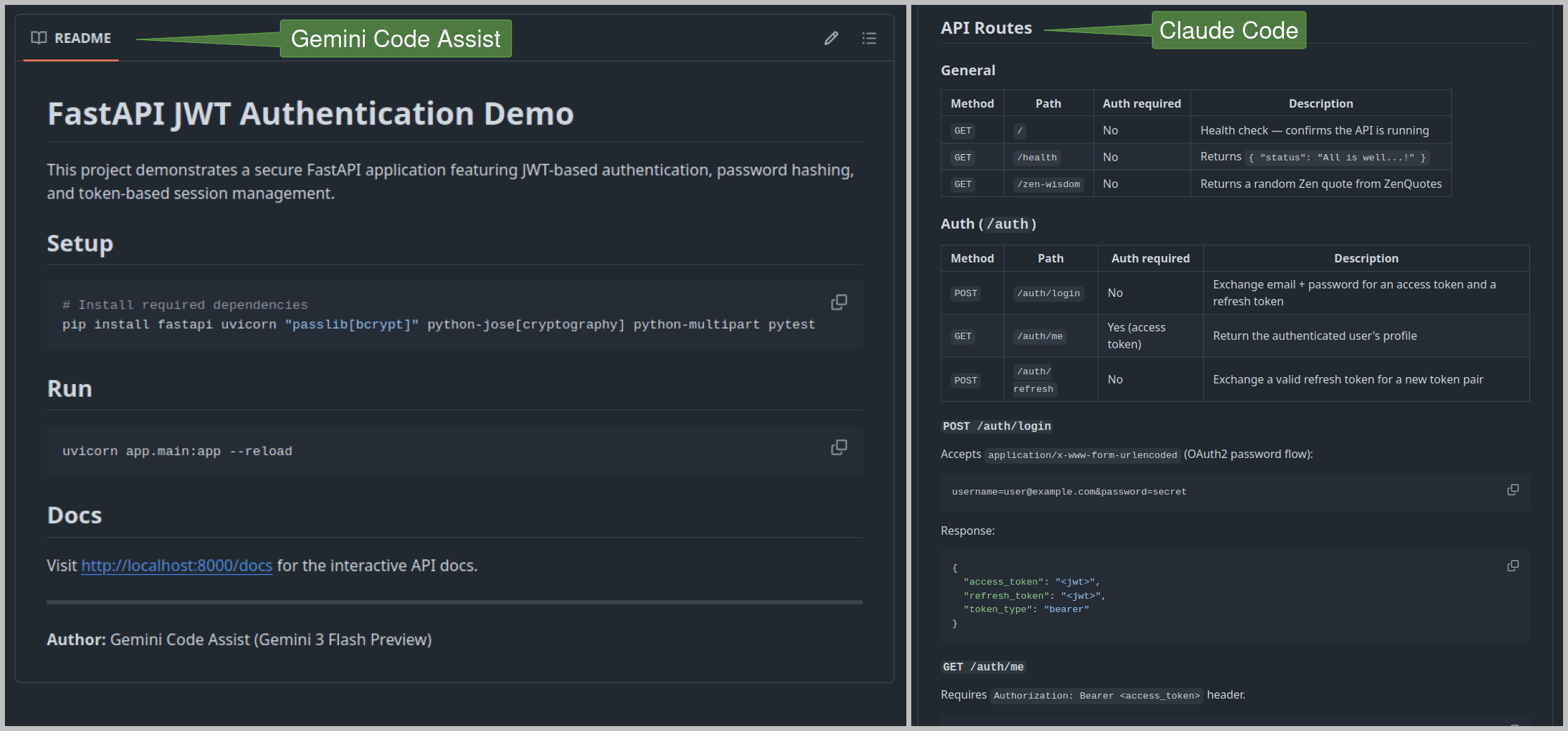

In practice, Gemini fits best when you want to stay closely involved in the implementation. Claude Code is better suited for tasks where you can clearly specify what you want and let it deliver a complete solution, documentation included. The README files each tool produced from the same prompt illustrate this well:

README files created by Gemini and Claude Code

Gemini's README was sparse. Even after three follow-up prompts to expand on implementation details and routes, it added only a brief sentence at the top, with no endpoint structure or usage documentation. Claude Code produced a thorough README from the start, covering API routes in detail, including auth requirements, request/response formats, and clear grouping under /auth.

You can compare them directly here: Gemini README versus Claude Code README.

Cost and accessibility

Gemini Code Assist has a clear pricing advantage: up to 180,000 code completions per month, plus free access to the Gemini CLI. As mentioned, Claude Code has no free tier. That gap directly affects how and where you can use these tools. For individual developers or small teams working within budget constraints, Gemini is the natural entry point; Claude Code falls into the "justify the cost first" category.

Claude Code's stronger system-level reasoning and more complete outputs do come at a price, both in subscription cost and token usage, which can run surprisingly high (as this analysis shows). The trade-off is straightforward: Gemini optimizes for accessibility, Claude Code optimizes for capability, and that capability carries a real cost.

When to reach for Gemini Code Assist or Claude Code

Reach for Gemini Code Assist when you're prototyping solo, exploring a new repo, or working on a weekend project where speed and zero cost matter more than production-readiness. Its free tier, excellent IDE integration, and quick turnaround make it a low-friction starting point for well-scoped tasks where you'll be reviewing and adjusting the output anyway.

Reach for Claude Code when the task has real consequences: refactoring a production auth flow, implementing a multi-file feature from scratch, or working in an unfamiliar codebase where missing context leads to missing migrations, broken tests, and password hashes leaking into API responses. The token cost is real, but so is the gap in output quality.

Used strategically, they can complement each other well: Gemini for quick orientation and low-stakes exploration, Claude Code when getting it right on the first pass actually matters.

Gemini Code Assist and Claude Code comparison table

| Criteria | Gemini Code Assist | Claude Code |
|---|---|---|
| Model Used | Gemini 3 Flash (Preview) | Claude Sonnet 4.6 |
| Task Time | ~6 minutes | Under 5 minutes |
| Code Quality | Partially correct; missed migration, failing tests, incomplete setup | Correct, modular, production-ready on first pass |
| Test Coverage | 4 tests, happy path and login failure | 12 tests, covers edge cases |
| Docs Quality | Sparse README, lacks endpoint details | Detailed, well-structured README |
| Token Usage and Cost | Low cost, generous free tier | Higher cost, heavy token usage |
| Best For | Incremental edits, inline assistance | Complex tasks, large codebases, maintainable projects |

These observations are grounded in this specific task and setup. In practice, both Gemini and Claude Code can behave quite differently depending on the codebase, tech stack, and your prompts.

The real-world reality of AI coding assistants

Gemini Code Assist and Claude Code are useful in genuinely different ways. Gemini is the tool you reach for when accessibility matters: zero cost to start, IDE-native, and fast enough for exploratory work and incremental edits. Claude Code is the tool you reach for when the work itself matters: multi-file implementations, production-bound codebases, and tasks where getting it wrong has real consequences. The hashed_password leak, the broken tests, the missing migration: none of these is catastrophic in isolation, but together they paint a picture of a tool that executes without fully reasoning.

Nevertheless, no tool should replace your engineering judgment. JWT authentication is exactly the kind of code that can look correct while hiding subtle flaws. Whatever AI assistant you're using, treat its output as a first draft: review the diffs, run the tests, and understand what's being generated before you ship it.

One thing that tightens the loop on both tools for auth work is giving them accurate product context to reason from. The Descope Docs MCP Server is a hosted MCP server that gives Claude Code and Gemini Code Assist direct access to Descope's documentation from inside your IDE, so the assistant can reference how to configure capabilities like session management, refresh token rotation, or MCP authorization.

Building auth with Descope is already simpler than coding from scratch, and adding the Descope Docs MCP Server only makes it easier. If you're planning on using a coding companion, output quality will improve noticeably when the agent has the right docs in front of it. Sign up for a free Descope account and join our developer community to continue your agentic auth journey.

For more developer guides, subscribe to the Descope blog or follow us on LinkedIn, X, and Bluesky.