An agentic identity represents a software agent that can act autonomously or on behalf of a user or tenant. While conventional machine identities are often broad and long-lived, properly secured agentic identities use ephemeral credentials, are scoped to specific tools or actions, and are tied to a delegating user whose consent governs what the agent is permitted to do.

Agentic identities are designed for runtime decision-making systems that require:

Non-interactive and machine-to-machine authentication

Fine-grained, capability-based authorization

Just-in-time creation and decommissioning

Short-lived credentials by default

Explicit delegation and traceability

The term “agentic identity” has emerged alongside the rapid growth of agentic AI itself. However, a stable, universally agreed-upon definition is still taking shape, largely because agentic infrastructure is so new. An AI agent could, for example, authenticate with a static API key instead of OAuth credentials, but that wouldn’t treat the agent as a first-class identity.

This post examines why AI agents need their own identity model, what makes agentic identities structurally different from traditional credentials, and how they apply to both developers building agent-ready applications and developers building the agents themselves.

Why agentic identity needs its own model

As AI agents move from experimental pilots to production deployments, the behavioral gap between traditional machine identity and agentic identity manifests as practical implementation hurdles. A Descope survey of 400+ identity decision-makers found that while 88% of organizations were using or planning to use AI agents, only 37% had progressed past the pilot stage. Identity challenges are a primary cause, as most organizations struggle to properly classify and control AI agents.

Traditional identity systems were designed for two kinds of entities: humans who authenticate interactively, and non-human identities (NHIs) like service accounts, API clients, and workloads that follow deterministic patterns. AI agents don’t fit neatly into either category (though they’re typically placed in the latter).

| | Human identities | Traditional NHIs (devices, workloads) | Agentic identities |
|---|---|---|---|
| Behavior | Interactive, UI-driven | Static and deterministic | Dynamic and non-deterministic |
| Auth patterns | Knowledge, possession, and inherence-based auth; MFA | OAuth tokens, API keys, M2M flows, certificates | Delegated access, consent flows, MCP |
| Scale | Bounded by headcount | Bounded by infrastructure | Autonomous or semi-autonomous at scale |
| Access model | Role and group-based | Static scopes and permissions | Function and tool-level access |

Unlike human users, most agents don’t interact through UIs, can’t respond to browser-based MFA prompts, and operate continuously until their task is done rather than in discrete sessions. And unlike traditional NHIs, agents are non-deterministic: they decide which tools to call, APIs to access, and actions to take based on context rather than a fixed policy or script.

While a microservice (i.e., a workload NHI) calls the same endpoint with the same credentials in the same auth pattern, an agent might query a database, draft an email, schedule a meeting, and submit a ticket all in a single workflow. Each of these actions requires different permissions on varying systems with distinct auth requirements.

Human-centric credentials (SSO sessions, passwords) give agents dangerously broad permissions with limited revocability. Static machine credentials (long-lived API keys, service accounts) lack the granularity and auditability that agentic systems need. Meanwhile, agents present a novel risk surface packed with vulnerabilities like prompt injection and tool poisoning.

Security considerations for agentic identity

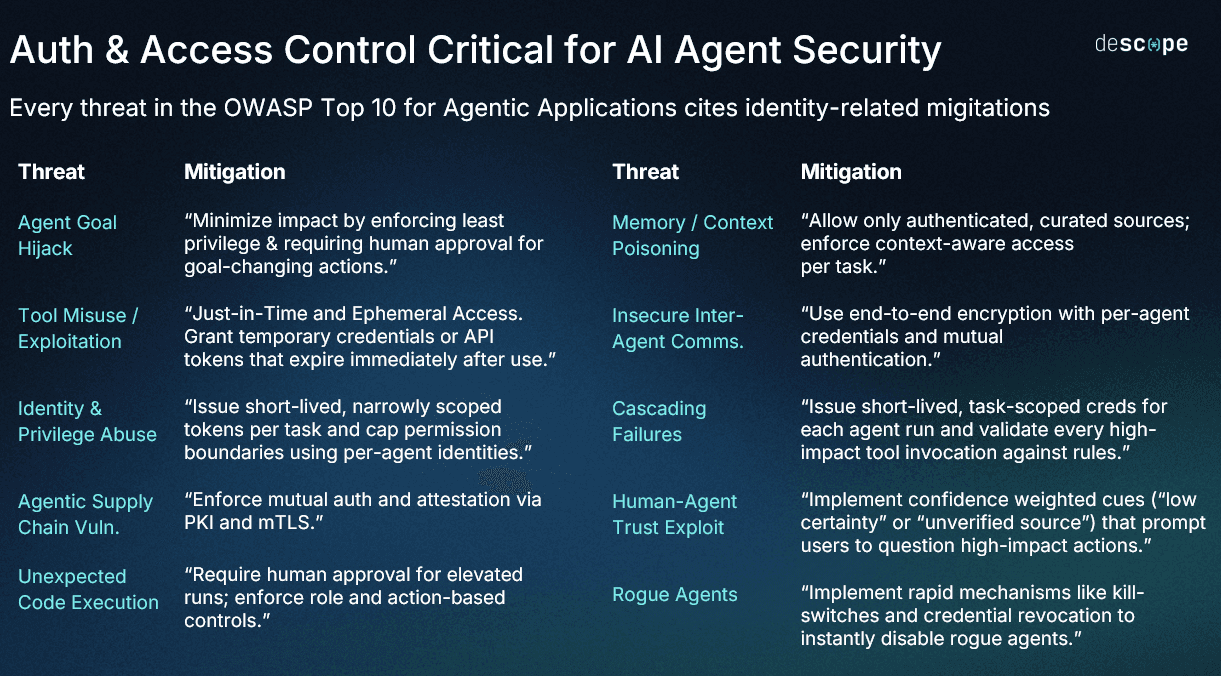

The OWASP Top 10 for Agentic Applications formalizes the security risks that agentic AI systems face. Agents can be manipulated through prompt injection, be persuaded to misuse the tools they’re given, and inherit permissions in ways that expand the blast radius quickly. What’s most noteworthy about this list, however, is that every threat within it has at least one identity-related mitigation.

For example:

Agent goal hijack is an attack in which agent behavior is redirected through poisoned inputs. It’s mitigated by enforcing least privilege and requiring human approval for goal-changing actions.

Tool misuse and exploitation occurs when agents misuse legitimate tools because of over-privileged access. The mitigation calls for just-in-time and ephemeral access with credentials that expire immediately after use.

Identity and privilege abuse, where attackers exploit delegation chains to escalate access, is addressed by issuing short-lived, narrowly scoped tokens per task with per-agent identities.

Rogue agents, or agents that drift from their intended purpose without external manipulation, require rapid credential revocation to instantly disable them.

The pattern consistently points to agent-specific identity controls: scoped credentials, per-agent identities, consent flows, delegation chain management, and revocation mechanisms. Treating agentic identities as their own first-class category is justified both by the security considerations and by the behavioral differences that separate them from traditional NHIs.

What makes managing agentic identities different

When managed properly, three properties emerge that separate agentic identities from traditional NHIs: ephemeral credentials, granular delegated access, and strong protocol compliance.

Ephemeral credentials

AI agents operate at a velocity and scale that amplifies the blast radius of any compromised credential. An agent with a long-lived API key and broad permissions can interact with hundreds of systems in its everyday tasks, so a single leaked key means far-reaching and difficult-to-contain exposure.

Managing agentic identities properly means issuing credentials that are short-lived, scoped to specific actions or sessions, and revocable at any point. Rather than a static key that the agent holds indefinitely, a JWT is issued with a narrow expiration window, audience-restricted to the specific resource being accessed, and tied to the specific user on whose behalf the agent is acting. When the task completes, the credential expires.

Granular delegated access

Most agent actions are performed on behalf of a user. Unlike a conventional client credentials flow where a service authenticates as itself with no user context, managing agentic identities means binding the agent to a specific user’s authorization. The agent accesses only what that user has consented to, for the scopes that were granted, and only for the duration that consent is valid.

This binding is typically initiated through an Authorization Code Flow with PKCE (Proof Key for Code Exchange), where the user authenticates and approves the requested scopes before the agent receives a token. A delegation chain is maintained (who authorized the agent, what scopes, for which resources, for how long), and the OAuth token exchange’s actor (`act`) claim captures this relationship so downstream services can distinguish the user’s identity (subject claim, or `sub`) from the agent making the call.
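The PKCE portion of that flow can be sketched in a few lines of standard-library Python. The helper names, endpoint, client ID, and scope values below are hypothetical; the `S256` transformation itself (base64url of the SHA-256 digest of the verifier) follows RFC 7636, and the `resource` parameter is the Resource Indicator from RFC 8707.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode


def challenge_for(verifier: str) -> str:
    """Derive the S256 code challenge: base64url(SHA-256(verifier)), no padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def make_pkce_pair():
    """Return a fresh (code_verifier, code_challenge) pair."""
    # 32 random bytes encode to a 43-character verifier (RFC 7636 allows 43 to 128).
    verifier = base64.urlsafe_b64encode(secrets.token_bytes(32)).rstrip(b"=").decode("ascii")
    return verifier, challenge_for(verifier)


def build_authorization_url(authorize_endpoint, client_id, redirect_uri,
                            scope, resource, challenge, state):
    """Build the URL the user visits to authenticate and approve the scopes."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,                  # only what this task needs
        "resource": resource,            # bind the eventual token to one resource
        "code_challenge": challenge,
        "code_challenge_method": "S256",
        "state": state,                  # CSRF protection, echoed back on redirect
    }
    return authorize_endpoint + "?" + urlencode(params)


verifier, challenge = make_pkce_pair()
url = build_authorization_url(
    "https://auth.example.com/authorize",   # hypothetical authorization server
    "agent-client-id",
    "https://agent.example.com/callback",
    "calendar:read",
    "https://calendar.example.com",
    challenge,
    secrets.token_urlsafe(16),
)
```

The agent keeps `verifier` private and sends it only when redeeming the authorization code, so an intercepted code is useless without it.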

Strong protocol compliance

Managing agentic identities doesn’t require brand-new protocols, but it does demand compliant implementation of established standards and methods: OAuth 2.1, Dynamic Client Registration (DCR), Client ID Metadata Documents (CIMD), and Client-Initiated Backchannel Authentication (CIBA). The Model Context Protocol (MCP) auth spec specifies OAuth 2.1 for tool access, defines patterns for scope-based permissions, and outlines client registration models. However, the specification is actively evolving, and each revision can introduce new auth requirements. For organizations deploying MCP servers or agents, that means understanding the underlying protocols (and implementing them properly) remains a meaningful, ongoing engineering burden.

For a broader look at the identity mechanisms used in machine-to-machine and agentic scenarios, including the client credentials flow, OAuth token exchange, and adjacent patterns, see What is a Non-Human Identity (NHI)?

Agentic identity best practices

Implementing agentic identity in practical scenarios surfaces two distinct problem sets depending on what you’re building: an MCP server that needs to securely connect with AI agents, or an AI agent that needs to connect with external services or backend APIs on behalf of users.

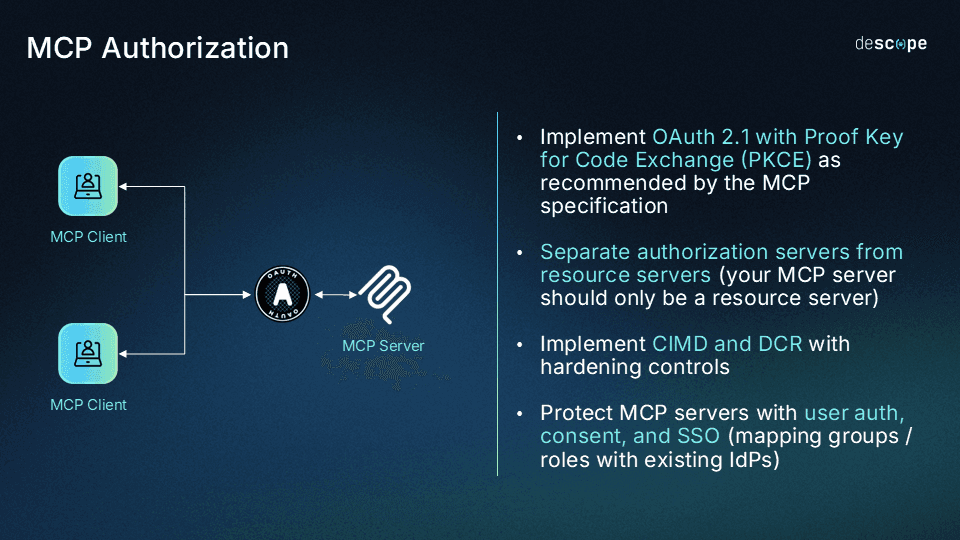

Building secure MCP servers

Organizations tackling an AI project want to open up their applications and data via MCP servers, which enable users to interact with their products using LLMs or AI agents. The problem is that the MCP authorization specification is complex, actively evolving, and requires real identity expertise to implement properly. Incorrectly configured MCP servers are vulnerable to identity-based exploits, and research has uncovered nearly 2,000 MCP servers on the open internet with no meaningful security at all.

Separate the authorization server from the resource server: The MCP auth spec now classifies MCP servers as OAuth Resource Servers, completely separate from dedicated authorization servers. Conflating the two means maintaining OAuth infrastructure alongside application logic, making auditing painful and compliance harder to sustain as the spec evolves.

Implement OAuth 2.1 and PKCE properly: OAuth 2.1 is the spec-mandated standard for HTTP-based MCP support, and PKCE is required for all public clients. Use Resource Indicators to bind tokens to specific MCP servers to prevent token reuse across different servers.

Build consent management into the flow: Before an MCP client can act, users should see a clear consent screen describing which tools are accessible, what data may be read or written, and for how long. Consent should be time-bound and scoped to the task.

Enforce scope-based access control at the tool level: An agent that can read calendar events should not automatically be able to write CRM records. Define per-tool scopes and centralize policy enforcement so access decisions remain contextual and consistent across agents, servers, and tools.

Audit everything: Each MCP client should carry a dedicated identity tied to the user it acts on behalf of. Log every registration (which means supporting DCR, CIMD, and manual registration properly), consent grant, tool invocation, and token exchange. If you have existing auditing infrastructure, like a SIEM platform, integrate it with your audit event streaming.
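Several of these practices (audience binding, per-tool scopes, and auditing) meet at the point where the server decides whether to run a tool. The following is a minimal sketch of that decision, assuming the token has already been validated and decoded into a claims dictionary. The tool names, scope strings, and the in-memory `AuditLog` are hypothetical stand-ins for whatever policy store and event stream a real server uses.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of tool name to the scopes that tool requires.
TOOL_SCOPES = {
    "calendar.list_events": {"calendar:read"},
    "crm.update_record": {"crm:write"},
}


@dataclass
class AuditLog:
    """In-memory stand-in for an audit event stream (for example, a SIEM feed)."""
    events: list = field(default_factory=list)

    def record(self, **event):
        self.events.append(event)


def authorize_tool_call(claims, tool_name, server_resource, audit_log):
    """Allow a call only if the token targets this server and holds the tool's scopes."""
    required = TOOL_SCOPES.get(tool_name)
    granted = set(claims.get("scope", "").split())
    audience = claims.get("aud")
    # The "aud" claim may be a single string or a list of strings.
    audiences = [audience] if isinstance(audience, str) else list(audience or [])

    if required is None:
        decision, reason = "deny", "unknown tool"
    elif server_resource not in audiences:
        decision, reason = "deny", "token not issued for this server"
    elif not required <= granted:
        decision, reason = "deny", "missing scope"
    else:
        decision, reason = "allow", "ok"

    # Log every decision, including denials, with the user the client acts for.
    audit_log.record(
        user=claims.get("sub"),
        tool=tool_name,
        decision=decision,
        reason=reason,
        scopes=sorted(granted),
    )
    return decision == "allow"
```

With this shape, a token carrying only `calendar:read` can list events but is denied `crm.update_record`, and a token minted for a different MCP server is refused before scopes are even considered.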

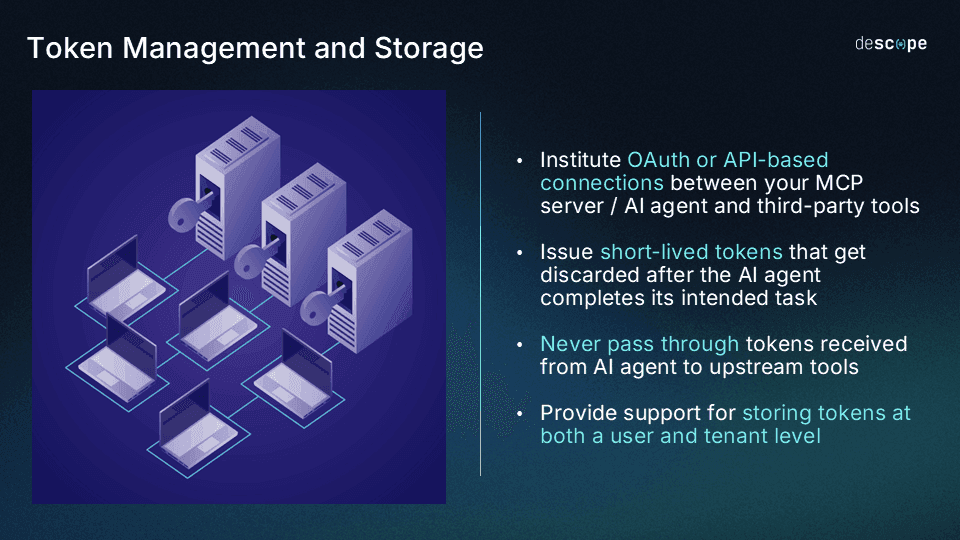

Building production-ready AI agents

AI agent developers stand at the opposite end of this equation. Their agents need to take actions across third-party services (e.g., Slack, Google Calendar, HubSpot, Shopify, CRMs, databases, other MCP servers) on behalf of their users. Each connection requires its own OAuth token exchange, scopes, rotation cadence, and secure storage. Having agents ask users for their credentials (and then use them) is a security non-starter: it obscures agentic behavior and eliminates the ability to audit what agents are doing on their own.

Never share or store user credentials in the agent: Use OAuth-based delegation so the agent receives scoped tokens rather than raw credentials (passwords, API keys). The agent should never be in possession of the user’s actual credentials.

Use a credential vault for downstream connections: Rather than managing token lifecycle per-integration, delegate storage, refresh, rotation, and revocation to a purpose-built credential vault. This limits the blast radius if the agent is ever compromised.

Request minimum viable scopes: Only request the permissions the agent needs for the current task. Use progressive scoping to request elevated access only when the task demands it, rather than requesting broad access up front.

Bind every action to a delegating user: Ensure the agent’s tokens carry the identity of the user who authorized the action. Downstream services should be able to verify both who the agent is and on whose behalf it is acting.

Log every downstream action with user context: Every tool call and token exchange should be logged with the delegating user’s identity, the scopes used, and the resource accessed. This lets security teams trace which user authorized which action, and revoke access when needed.
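The last two practices can be illustrated with a short sketch that reads the delegation chain out of a token's claims and turns it into an audit record. It assumes the claims follow the OAuth token exchange convention described earlier, where `sub` is the user and `act` identifies the agent, with any earlier actors nested inside it. The function names, record fields, and example identifiers are hypothetical.

```python
import time


def describe_delegation(claims):
    """Return (user, actors): the subject plus the chain of actors, current actor first."""
    user = claims["sub"]
    actors = []
    act = claims.get("act")
    # Each nested "act" object names an earlier actor in the delegation chain.
    while isinstance(act, dict):
        actors.append(act.get("sub"))
        act = act.get("act")
    return user, actors


def audit_record(claims, tool, resource, now=None):
    """Build a log entry that ties one downstream action to its delegating user."""
    user, actors = describe_delegation(claims)
    return {
        "timestamp": time.time() if now is None else now,
        "on_behalf_of": user,                            # who authorized the action
        "acting_agent": actors[0] if actors else None,   # who actually made the call
        "delegation_chain": actors,
        "scopes": claims.get("scope", "").split(),
        "tool": tool,
        "resource": resource,
    }


claims = {
    "sub": "user-123",
    "scope": "tickets:write",
    "act": {"sub": "agent-support-bot", "act": {"sub": "agent-orchestrator"}},
}
record = audit_record(claims, "tickets.create", "https://tickets.example.com")
```

Because the record keeps the user and the agent as separate fields, a security team can answer both "what did this agent do?" and "what was done in this user's name?" from the same log.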

Consistent governance for agentic identity

Agentic identities are a distinct and particularly difficult-to-manage type of non-human identity, shaped more by the requirements of non-deterministic behavior than by any intrinsic characteristic. AI agents don’t fit the patterns designed for human users or traditional machine workloads, but that doesn’t mean they’re impossible to govern.

Managing them properly means issuing ephemeral credentials, enforcing granular delegated access, and maintaining strong protocol compliance rather than jury-rigging conventional or legacy identity systems for a use case they were never designed for. To learn how Descope helps govern agentic identities, explore the Agentic Identity Hub, join the AuthTown dev community, and start using Descope now with a Free Forever account.